Some Reasons Why Deep Learning has a Bright Future

Would you like to see the future? This post aims to predict what will happen to the field of Deep Learning. Scroll on.

Microprocessor Trends

Who doesn’t like to see the real cause of trends?

“Get Twice the Power at a Constant Price Every 18 months”

Some people have said that Moore’s Law is coming to an end. One version of this law states that every 18 months, computers get twice the computing power they had before, at a constant price. However, as seen on the chart, improvements in computing seem to have come to a halt between 2000 and 2010.
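As a rough illustration of what an 18-month doubling implies, here is a minimal sketch of the arithmetic. The function name `growth_factor` and the time spans in the loop are illustrative assumptions, not figures taken from the chart.

```python
def growth_factor(years: float, doubling_period_years: float = 1.5) -> float:
    """Return how many times computing power multiplies over `years`,
    assuming it doubles every `doubling_period_years` at a constant price."""
    return 2.0 ** (years / doubling_period_years)


if __name__ == "__main__":
    # Hypothetical time spans, chosen only to show the compounding effect.
    for years in (1.5, 3, 15):
        print(f"After {years} years: x{growth_factor(years):.0f}")
```

Under this assumption, 15 years means 10 doublings, or about a thousandfold increase in computing power for the same price. That compounding is why even a temporary stall matters so much.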

For Instance, See Moore’s Law Graph.

But the Growth Stalled…

This halt happened because we’re reaching the size limit of transistors, an essential part of CPUs. Making them smaller than this limit introduces computing errors because of quantum effects. Quantum computing will be a good thing; however, it won’t replace classical computers as we know them today.

Faith isn’t lost: invest in parallel computing

Moore’s Law isn’t broken yet in another respect: the number of transistors we can stack in parallel. This means that we can still speed up computing through parallel processing. In simpler words: by having more cores. GPUs are moving in this direction: it’s already fairly common to see GPUs with 2000 cores.

That means Deep Learning is a good bet

Luckily for Deep Learning, it consists largely of matrix multiplications. This means that deep learning algorithms can be massively parallelized and will profit from future improvements from what remains of Moore’s Law.
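To make the parallelism concrete, here is a minimal sketch in plain Python, assuming a single dense layer with no bias or activation. Each output row depends only on one input row and the shared weights, so the rows can be computed independently of each other. The helper names (`row_times_matrix`, `matmul_sequential`, `matmul_parallel`) are hypothetical, and the thread pool is only there to show that the work splits cleanly; it is not meant to show a real speedup, which in practice comes from GPU kernels.

```python
from concurrent.futures import ThreadPoolExecutor
from functools import partial

Matrix = list[list[float]]


def row_times_matrix(row: list[float], weights: Matrix) -> list[float]:
    """Multiply one input row by the weight matrix (one independent unit of work)."""
    n_out = len(weights[0])
    return [
        sum(row[k] * weights[k][j] for k in range(len(row)))
        for j in range(n_out)
    ]


def matmul_sequential(inputs: Matrix, weights: Matrix) -> Matrix:
    """Compute the output rows one after the other."""
    return [row_times_matrix(row, weights) for row in inputs]


def matmul_parallel(inputs: Matrix, weights: Matrix, workers: int = 4) -> Matrix:
    """Hand each row to a worker; no row needs the result of another row."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in the same order as the inputs.
        return list(pool.map(partial(row_times_matrix, weights=weights), inputs))


if __name__ == "__main__":
    inputs = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]    # 3 samples, 2 features
    weights = [[1.0, 0.0, 2.0], [0.0, 1.0, 3.0]]     # 2 features, 3 outputs
    print(matmul_parallel(inputs, weights))
```

Both functions return the same result. The point is that the work can be divided among as many workers as there are rows (or even individual output elements), which is the kind of workload that many-core hardware handles well.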

See also: Awesome Deep Learning Resources

The AI Singularity in 2029

A prediction by Ray Kurzweil

Ray Kurzweil predicts that the singularity will happen in 2029. That is, as he defines it, the moment when a $1,000 computer has 1,000 times the computing power of the human brain. He is confident that this will happen, and he insists that what still needs work to reach a true singularity is better algorithms.

“We’re limited by the algorithms we use”

So we’d be mostly limited by not having found the best mathematical formulas yet. Until then, for learning to properly take place using deep learning, one needs to feed a lot of data to deep learning algorithms.

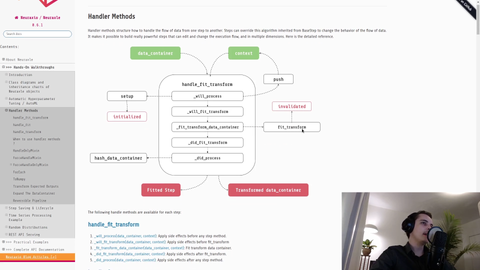

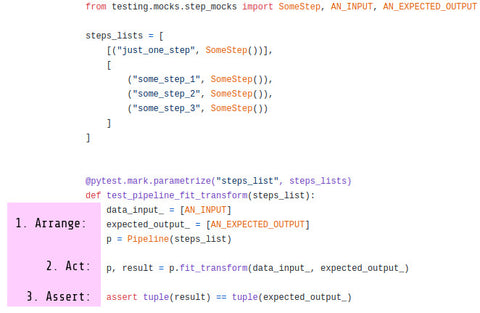

We, at Neuraxio, predict that Deep Learning algorithms built for time series processing will be a strong foundation to build upon as the field moves toward its future.

Big Data and AI

Yes, this keyword is so 2014. It is still relevant.

“90% of existing data was created in the last 2 years”

It is reported by IBM New Vantage that 90% of the financial data was accumulated in the past 2 years. That’s a lot. At this rate of growth, we’ll have more and more data to feed deep learning algorithms.

“By 2020, 37% of the information will have a potential for analysis”

That is what The Guardian reports, according to big data statistics from IDC. In contrast, only 0.5% of all data was analyzed in 2012, according to the same source. Information is increasingly structured, and organizations are now more aware of the tools available to analyze their data. This means that deep learning algorithms will soon have easier access to data, whether it is stored locally or in the cloud.

It’s about intelligence.

It is about what defines us, humans, compared to all previous species: our intelligence.

The key to intelligence and cognition is a very interesting subject to explore and is not yet well understood. Technologies related to this field are promising and, simply, interesting. Many people in it are driven by passion.

On top of that, deep learning algorithms may use Quantum Computing and will apply to machine-brain interfaces in the future. Trend stacking at its finest: a recipe for success is to align as many stars as possible while working on practical matters.

What will Deep Learning become in 10 years?

We predict that deep learning in 10 years may be more about Spiking Neural Networks (SNNs).

Those types of artificial neural networks may overcome the limitations of Deep Learning, as they are closer to natural neurons, although they require more (parallelizable) computing power. If you’re interested in learning more on that topic, see my other article on the limits and future of research in deep learning and my other article on Spiking Neural Networks (SNNs).

Although I did some research on SNNs, they are a long shot and aren’t useful yet. For now, regular Artificial Neural Networks, such as LSTMs, are good for solving a plethora of tasks. Until SNNs become useful, it’s very practical to have good tools for deploying deep learning production pipelines, such as a good machine learning framework in Python that correctly integrates deep learning algorithms within computing environments.

Conclusion

First, Moore’s Law and computing trends indicate that more and more things will be parallelized. Deep Learning will exploit that.

Second, the AI singularity is predicted to happen in 2029 according to Ray Kurzweil. Advancing Deep Learning research is a way to get there, reap the rewards, and do good.

Third, data doesn’t sleep. More and more data is accumulated every day. Deep Learning will exploit big data.

Finally, deep learning is about intelligence. It is about technology, it is about the brain, it is about learning, it is about what defines us, humans, compared to all previous species: our intelligence. Curious people will know their way around deep learning.

If you liked this article, consider following us for more!